In this guide, you’ll learn what AgentOps is, why it matters, and the AgentOps principles, lifecycle, and core capabilities that enable AI agents to run autonomously, safely, and with clear accountability in production. You’ll also learn how to apply AgentOps to Salesforce Agentforce—transforming AI agents from experiments into governed, auditable enterprise assets leaders can trust.

Introduction to AgentOps

AgentOps is the discipline that operationalizes, governs, and scales autonomous AI agents in the enterprise. Just as DevOps brought structure and reliability to software delivery, AgentOps brings trust, control, and accountability to AI systems that can act, decide, and execute on the business's behalf.

At its core, AgentOps is built on simple principles: transparency, governance by design, controlled autonomy, least-privilege access, auditability, and measurable value. When applied to Salesforce Agentforce, these principles translate into agents that operate through native permission models, Flow-based controls, Shield auditability, metadata-driven governance, and structured change management.

Without AgentOps:

- AI agents remain pilots, demos, or shelfware.

- Risk teams block production deployment.

- Leaders lack confidence in Agentic AI outcomes.

- Accountability is unclear or non-existent when agents fail.

With AgentOps:

- AI agents become auditable, observable, and governable.

- Enterprises move from experimentation to tangible value.

- Autonomous systems adhere to policy, ethics, and controls.

What is AgentOps?

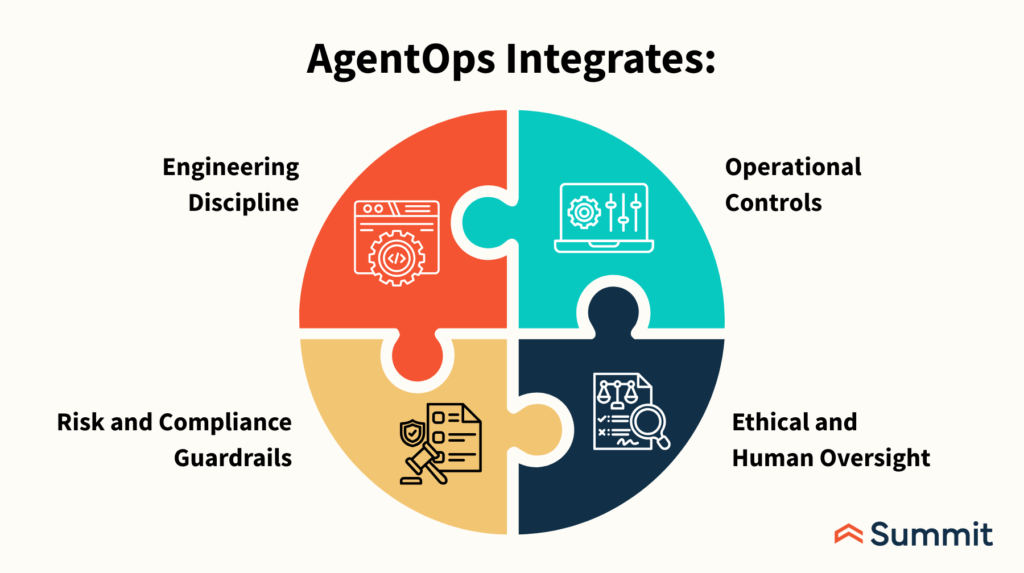

AgentOps is a cross-functional operating model that governs how AI agents are designed, deployed, monitored, and continuously improved across their entire lifecycle, bringing together engineering discipline, operational controls, risk and compliance guardrails, and ethical human oversight.

AgentOps answers the next-generation question: how do we allow AI to act autonomously while remaining safe, responsible, and accountable? This evolution is inevitable. Every major technology wave has moved from innovation to chaos to discipline, from CMMI and ITIL in the software era, to cloud governance, to DevOps for Agile delivery.

AI agents represent the next inflection point: they are the first enterprise systems capable of independent, non-deterministic action, introducing new forms of operational and autonomous risk that demand a formal, enterprise-grade operating model. AgentOps is that discipline.

AgentOps integrates:

- Engineering discipline

- Operational controls

- Risk & compliance guardrails

- Ethical and human oversight

AgentOps is rapidly emerging as a foundational discipline for organizations deploying AI agents at scale. As the AI agents market accelerates toward an estimated $50 billion by 2030, the need for robust operational practices around agent behavior, agent performance, and multi-agent systems has never been greater.

Every major technology shift follows the same arc.

If DevOps answers “How do we ship software safely and fast?”, AgentOps answers “How do we enable AI to act safely and responsibly?” AgentOps is not optional; it is inevitable.

| Era | Innovation | Chaos | Discipline |

| 1990s | Software | Production instability | CMMI / ITIL |

| 2000s | Cloud | Security & sprawl | Cloud governance |

| 2010s | Agile | Quality & predictability | DevOps |

| 2020s | AI Agents | Autonomous risk | AgentOps |

AI agents are the first enterprise systems that can act independently without hard-coded logic. That brings AgentOps closer to operations and governance than traditional engineering.

Why AgentOps Exists

AgentOps exists because AI agents introduce a different operating risk than traditional software. Unlike deterministic applications that execute predefined logic and change only through controlled code releases, AI agents make probabilistic, non-deterministic decisions that evolve through prompts, models, and context, and can act autonomously or semi-autonomously without direct human initiation.

This shift breaks many current assumptions in software governance, testing, and operational controls. Without a dedicated operating model, organizations lack visibility into how decisions are made, how behavior changes over time, and who is accountable when an agent acts unexpectedly.

AgentOps addresses this gap by providing the structure, controls, and governance needed to safely operationalize systems that can think, decide, and act on their own.

Autonomous, non-deterministic behavior creates new enterprise risks:

- Invisible decision paths

- Prompt drift over time

- Unbounded permissions

- No clear audit trail

- Ethical and compliance exposure

AgentOps exists to manage these risks without killing innovation.

| Traditional Software | AI Agents |

| Executes predefined logic | Makes probabilistic decisions |

| Deterministic behavior | Non-deterministic behavior |

| Changes via code releases | Changes via prompts, models, and context |

| Human-initiated actions | Autonomous or semi-autonomous actions |

Six Core Principles of AgentOps

AgentOps is grounded in six core principles that ensure AI agents operate safely, consistently, and responsibly in the enterprise: Trust Through Transparency, Governance by Design, Controlled Autonomy, Repeatability Over Heroics, Safety Before Scale, and, most importantly, Continuous Improvement.

1. Trust Through Transparency

If you can’t observe an agent, you can’t trust it. Agents must expose:

- What they did

- Why they did it

- What data they used

- What action they attempted

2. Governance by Design

Governance is not bolted on after deployment. AgentOps embeds:

- Policy enforcement

- Permission boundaries

- Approval workflows

- Ethical constraints

3. Controlled Autonomy

Not all agents deserve full autonomy. AgentOps supports:

- Human-in-the-loop

- Human-on-the-loop

- Fully autonomous (when appropriate)

4. Repeatability Over Heroics

Agents must behave consistently across:

- Environments

- Versions

- Contexts

None of this “it worked last time” AI nonsense.

5. Safety Before Scale

Production readiness is earned, not assumed. Agents graduate through environments just like software:

- Sandbox → Test → Staging → Production

6. Continuous Improvement

Agents learn, but enterprises must control how they learn.

The AgentOps Lifecycle

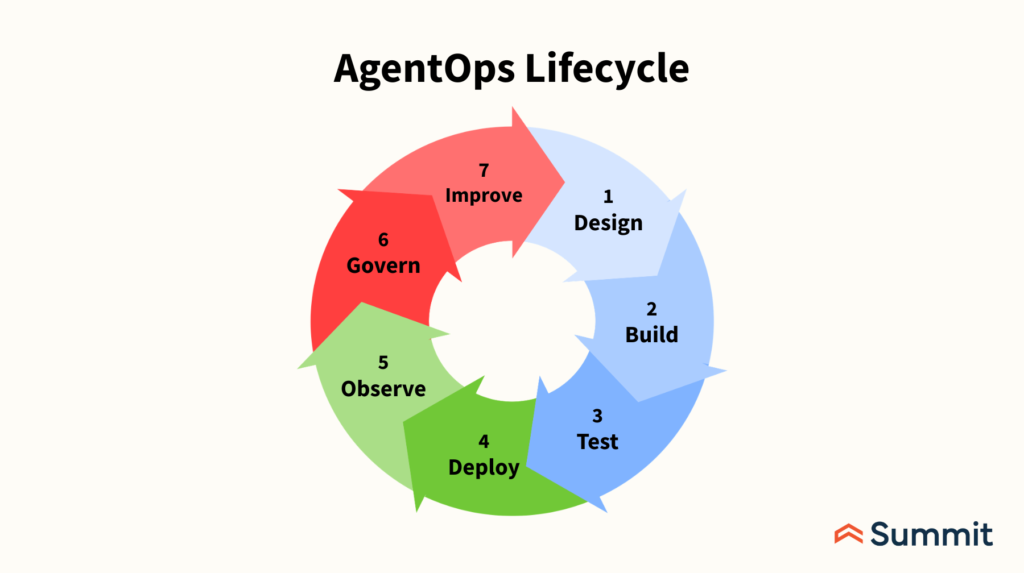

The AgentOps lifecycle mirrors DevOps but is purpose-built for autonomous, decision-making systems, guiding AI agents from concept to continuous improvement.

It begins with design, where the agent’s purpose, scope, allowed actions, ethical boundaries, and success metrics are defined and formalized through an agent charter, action inventory, and risk classification.

During build, prompts, tools, permissions, and context are configured with strong controls, including prompt versioning, action whitelisting, and least-privilege access.

Testing focuses on behavior rather than just outputs, including scenario replay, edge cases, bias and hallucination checks, and negative testing to ensure agents act appropriately under all conditions.

In deployment, agents are released with environment-specific controls, autonomy feature flags, approval thresholds, and rollback mechanisms. Once live, observability ensures action-level logging, decision traceability, performance monitoring, and drift detection.

Governance enforces policies, compliance, ethical standards, and access audits on an ongoing basis, while continuous improvement enables controlled refinement of prompts, policies, scope, and autonomy as trust and maturity increase.

1. Design

- Define agent purpose and scope

- Identify allowed actions

- Establish ethical boundaries

- Define success metrics

Key Outputs

- Agent charter

- Action inventory

- Risk classification

2. Build

- Prompt engineering

- Tool/action configuration

- Role-scoped permissions

- Context and memory rules

Key Controls

- Prompt versioning

- Action whitelisting

- Least-privilege access

3. Test

- Scenario testing

- Edge-case simulation

- Bias and hallucination testing

- Negative testing (“what should it NOT do?”)

AgentOps Testing ≠ QA. You test behavior, not just outputs.

4. Deploy

- Environment-specific controls

- Feature flags for autonomy

- Approval thresholds

- Rollback mechanisms

5. Observe

- Action-level logging

- Decision traceability

- Performance metrics

- Drift detection

6. Govern

- Policy enforcement

- Compliance validation

- Ethical reviews

- Access audits

7. Improve

- Prompt refinement

- Policy updates

- Scope expansion

- Autonomy elevation

Core AgentOps Capabilities

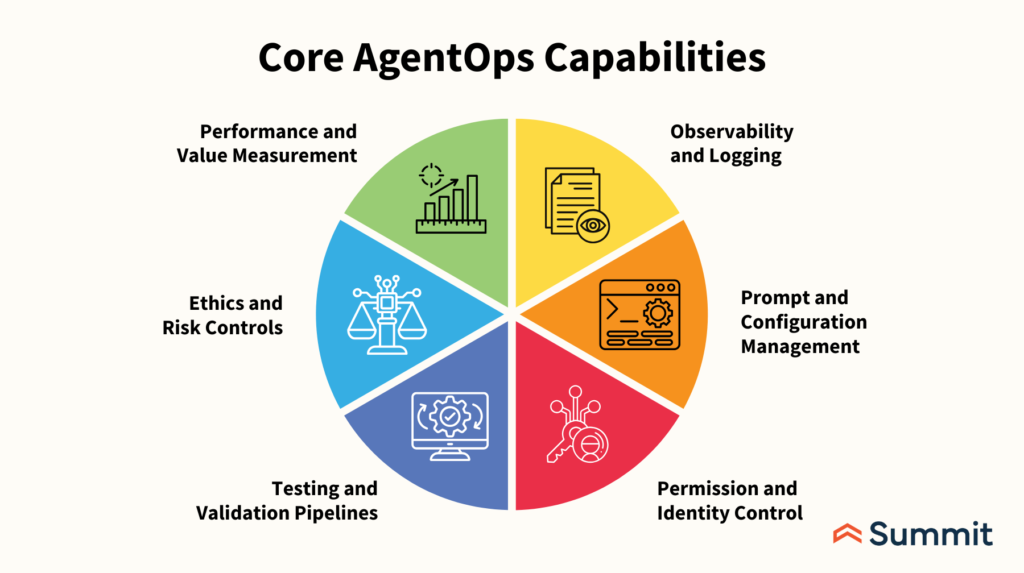

The core AgentOps capabilities, or pillars, establish the controls required to run AI agents as trusted enterprise systems: Observability & Logging, Prompt & Configuration Management, Permission & Identity Control, Testing & Validation Pipeline, Ethics & Risk Controls, and Performance & Value Measurement.

1. Observability and Logging

Every agent action must be:

- Logged

- Timestamped

- Attributed

- Replayable

If an agent touches a system of record, it must leave a trail.
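The four properties above can be made concrete as a log record. What follows is a minimal, platform-neutral Python sketch, not a Salesforce or Agentforce API: `AgentActionRecord`, `record_action`, and every field name are hypothetical and chosen only for illustration.

```python
import json
from dataclasses import dataclass, asdict, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AgentActionRecord:
    """One audit entry per agent action (hypothetical schema)."""
    agent_id: str    # attributed: which agent acted
    action: str      # what the agent attempted
    inputs: dict     # replayable: the exact inputs the action received
    rationale: str   # why the agent chose this action
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )                # timestamped, in UTC


def record_action(log: list, record: AgentActionRecord) -> str:
    """Append the record to the log as one JSON line and return that line."""
    line = json.dumps(asdict(record), sort_keys=True)
    log.append(line)
    return line


audit_log = []
record_action(
    audit_log,
    AgentActionRecord(
        agent_id="case-triage-agent",
        action="update_case_priority",
        inputs={"case_id": "500-EXAMPLE", "priority": "High"},
        rationale="Customer reported an outage affecting production.",
    ),
)

# Replay: the stored inputs are enough to re-run the same action in a sandbox.
replayed = json.loads(audit_log[0])
```

Storing the inputs alongside the rationale is what makes an entry replayable rather than merely descriptive.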

2. Prompt & Configuration Management

Prompts are source code. AgentOps requires:

- Version control

- Change approvals

- Rollback support

- Impact analysis
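Treating prompts as source code might look like the following sketch. It is an in-memory stand-in for a real version-control system, written under the assumption that each change needs a named approver; `PromptRegistry` and `PromptVersion` are hypothetical names, not part of any product.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptVersion:
    version: int
    text: str
    approved_by: str  # change approval: who signed off
    digest: str       # content hash, so silent edits are detectable


class PromptRegistry:
    """In-memory stand-in for a version-controlled prompt store."""

    def __init__(self) -> None:
        self._history = []
        self._active = -1  # index into _history; -1 means nothing published

    def publish(self, text: str, approved_by: str) -> PromptVersion:
        if not approved_by:
            raise ValueError("a prompt change needs a named approver")
        digest = hashlib.sha256(text.encode("utf-8")).hexdigest()
        pv = PromptVersion(len(self._history) + 1, text, approved_by, digest)
        self._history.append(pv)
        self._active = len(self._history) - 1
        return pv

    def rollback(self) -> PromptVersion:
        if self._active <= 0:
            raise RuntimeError("no earlier version to roll back to")
        self._active -= 1
        return self._history[self._active]

    @property
    def active(self) -> PromptVersion:
        if self._active < 0:
            raise RuntimeError("no prompt has been published")
        return self._history[self._active]


registry = PromptRegistry()
registry.publish("Summarize the case in two sentences.", approved_by="agent.owner")
registry.publish("Summarize the case in one sentence.", approved_by="agent.owner")
restored = registry.rollback()  # the first version becomes active again
```

Impact analysis is not modeled here; in practice it would compare agent behavior on a fixed scenario set before and after the change.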

3. Permission & Identity Control

Agents must operate with:

- Role-based permissions

- Scoped actions

- Environment-specific access

No “admin-by-default” agents.
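A deny-by-default check is one way to read these three requirements together. The sketch below is illustrative Python with hypothetical names (`AgentGrant`, `is_permitted`); it assumes permissions are expressed as explicit grants per agent and per environment.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentGrant:
    """Explicit grant: one agent, one environment, a fixed set of actions."""
    agent_id: str
    environment: str
    allowed_actions: frozenset


def is_permitted(grants: list, agent_id: str, environment: str, action: str) -> bool:
    """Deny by default: allow only when a matching grant names the action."""
    return any(
        g.agent_id == agent_id
        and g.environment == environment
        and action in g.allowed_actions
        for g in grants
    )


grants = [
    AgentGrant("billing-agent", "sandbox", frozenset({"read_invoice", "draft_credit"})),
    AgentGrant("billing-agent", "production", frozenset({"read_invoice"})),
]

can_read_in_prod = is_permitted(grants, "billing-agent", "production", "read_invoice")
can_credit_in_prod = is_permitted(grants, "billing-agent", "production", "draft_credit")
unknown_agent = is_permitted(grants, "other-agent", "sandbox", "read_invoice")
```

Because nothing is allowed unless a grant names it, an agent with no grants can do nothing, which is the opposite of admin-by-default.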

4. Testing & Validation Pipeline

AgentOps introduces Agent CI/CD:

- Behavioral testing

- Scenario replay

- Output validation

- Regression testing

5. Ethics & Risk Controls

AgentOps embeds:

- Bias mitigation

- Data sensitivity rules

- Regulatory constraints

- Human override mechanisms

6. Performance & Value Measurement

Track:

- Agent utilization

- Task success rate

- Error rates

- Business impact

Agents must justify their existence.

AgentOps Roles and Responsibilities

AgentOps is inherently cross-functional, requiring clear ownership and shared accountability across the organization to succeed. Each AI agent must have an Agent Owner responsible for business outcomes, an Agent Engineer accountable for design and configuration, and an AgentOps Lead overseeing governance, lifecycle management, and operational discipline. Security and Risk teams enforce policies, access controls, and threat mitigation, while Compliance and Legal ensure regulatory and ethical alignment.

When responsibility for AI rests solely with engineering, critical concerns about risk, accountability, and trust are overlooked, causing AgentOps initiatives to stall.

Effective AgentOps depends on aligning business, technology, risk, and compliance as equal stakeholders in how autonomous agents are deployed and managed:

- Agent Owner – Business accountability

- Agent Engineer – Design & configuration

- AgentOps Lead – Governance & lifecycle

- Security & Risk – Policy enforcement

- Compliance & Legal – Regulatory alignment

AgentOps fails when responsibility for AI rests solely with engineering.

AgentOps vs DevOps vs MLOps

AgentOps complements, but does not replace, DevOps and MLOps by addressing a distinct and critical gap created by autonomous systems.

DevOps focuses on reliably shipping and operating software, while MLOps concentrates on training, deploying, and maintaining machine learning models. AgentOps extends these disciplines into the realm of autonomy by governing how AI agents make decisions and take actions in production. It provides the operational, governance, and accountability framework needed when systems move beyond code execution and model inference to independent behavior.

Together, DevOps, MLOps, and AgentOps form a complete operational stack for building, deploying, and safely scaling intelligent, autonomous systems in the enterprise.

| Discipline | Focus |

| DevOps | Shipping software |

| MLOps | Training & deploying models |

| AgentOps | Governing autonomous behavior |

AgentOps does not replace DevOps or MLOps; it extends them into autonomy.

When You Know You Need AgentOps

Organizations know they need AgentOps when AI agents move beyond experimentation and begin interacting with real systems, data, and customers. If agents are capable of taking actions in production, touching financial, customer, or regulated data, or triggering executive questions about accountability when something goes wrong, the absence of an operating model becomes immediately visible.

AgentOps is also essential when risk, security, or compliance teams block AI deployments due to lack of controls, or when promising agent pilots stall and never scale into production. In each of these scenarios, the challenge is not the technology itself but the missing governance, transparency, and accountability that AgentOps is designed to provide.

You need AgentOps if:

- AI agents can take actions in production

- Agents touch customer, financial, or regulated data

- Leaders ask “Who’s accountable if this goes wrong?”

- Risk teams block AI deployment

- Agents stall after pilots

The Goal of AgentOps

The ultimate goal of AgentOps is to transform AI agents from isolated demos and stalled pilots into durable, enterprise-grade systems that deliver measurable value. It enables organizations to move from uncertainty and hesitation to confidence and control, replacing experimentation without structure with governed, scalable adoption.

AI agents are not novelty tools or temporary productivity boosts; they are systems capable of taking meaningful action within the business. Like any system of action, they require operational discipline, oversight, and accountability. AgentOps provides the framework that turns shelfware into trusted, operational infrastructure. AgentOps is how organizations move:

- From demos → durable systems

- From fear → confidence

- From experimentation → enterprise value

AI agents are not toys. They are systems of action. And systems of action demand operations.

The Big Idea: Agentforce Is the Runtime, AgentOps Is the Operating Model

To fully unlock the value of AI inside Salesforce, it’s critical to distinguish between capability and control.

Agentforce provides the technical runtime, handling LLM orchestration, tool invocation, and action execution, while AgentOps establishes the operating model that governs how those agents behave in the real world. In simple terms, Agentforce makes AI agents possible; AgentOps makes them safe, accountable, and ready for production.

Together, they transform autonomous potential into enterprise-grade performance.

Salesforce Agentforce provides:

- The agent runtime

- The LLM orchestration

- The tool/action execution layer

AgentOps defines:

- How agents are governed

- How autonomy is controlled

- How risk is managed

- How trust is earned

Agentforce makes AI agents possible. AgentOps makes them production-ready.

AgentOps Control Plane → Native Salesforce Constructs

Salesforce already provides the control plane AgentOps requires, provided it is used intentionally. Native capabilities like user context, permission sets, Flows, Apex, Event Monitoring, and Shield enforce identity, action mediation, governance, observability, and auditability without additional infrastructure. Kill switches are as simple as revoking permissions or deactivating Flows.

The key principle is straightforward: Agentforce agents should act only through standard Salesforce execution paths, ensuring that all autonomous behavior remains controlled, visible, and auditable.

| AgentOps Control | Salesforce / Agentforce Capability |

| Identity & trust | Salesforce user context, permission sets |

| Action mediation | Flow, Apex, invocable actions |

| Observability | Event Monitoring, Debug Logs, Shield |

| Governance | Profiles, permission sets, policies |

| Auditability | Field History, Event Logs, Shield |

| Kill switch | Permission revocation / Flow deactivation |

Key Insight: Agentforce agents should never act outside standard Salesforce execution paths.

Agent Classification → Agentforce Design Patterns

Effective AgentOps begins with classifying agents by risk and autonomy level, then mapping each tier to the appropriate Agentforce design pattern.

Informational agents remain read-only, assistive agents generate drafts with human approval, operational agents trigger controlled Flows with validation checks, and fully autonomous agents operate through structured combinations of Agentforce, Flow, Apex, and policy enforcement.

The advantage within Salesforce is clear: autonomy can be progressively enforced and governed using native platform capabilities, without requiring custom infrastructure.

| Agent Tier | Agentforce Pattern |

| Tier 0 – Informational | Agentforce Q&A (read-only data) |

| Tier 1 – Assistive | Agent drafts + human approval |

| Tier 2 – Operational | Agent-triggered Flows with checks |

| Tier 3 – Autonomous | Agent + Flow + Apex + policies |

Salesforce advantage: You can enforce autonomy without custom infrastructure.
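The tier table can be read as a checklist of controls that must exist before an agent goes live. The Python sketch below encodes that reading; the control labels (`read_only_access`, `human_approval`, and so on) are illustrative assumptions derived from the table, not Salesforce settings.

```python
from enum import IntEnum


class AgentTier(IntEnum):
    INFORMATIONAL = 0  # read-only Q&A
    ASSISTIVE = 1      # agent drafts, a human approves
    OPERATIONAL = 2    # agent triggers flows that run checks
    AUTONOMOUS = 3     # agent acts under flow checks and policies


# Controls each tier needs before go-live (illustrative labels).
REQUIRED_CONTROLS = {
    AgentTier.INFORMATIONAL: {"read_only_access"},
    AgentTier.ASSISTIVE: {"human_approval"},
    AgentTier.OPERATIONAL: {"flow_validation"},
    AgentTier.AUTONOMOUS: {"flow_validation", "policy_enforcement"},
}


def missing_controls(tier: AgentTier, configured: set) -> set:
    """Return the controls the tier requires that are not yet configured."""
    return REQUIRED_CONTROLS[tier] - configured


gaps = missing_controls(AgentTier.AUTONOMOUS, {"flow_validation"})
ready = missing_controls(AgentTier.ASSISTIVE, {"human_approval", "flow_validation"})
```

An empty result means the agent meets the bar for its tier; a non-empty result lists what is still missing.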

Agent Chartering → Salesforce Metadata

Every Agentforce agent should be governed by an explicit Agent Charter implemented directly in Salesforce metadata. The agent’s purpose is captured in its description and supporting documentation, allowed actions are enforced via invocable Flow or Apex allowlists, prohibited actions are restricted through permission exclusions, risk tiering is captured in custom metadata, and ownership is assigned to a named Salesforce user or role.

Storing Agent Charters as Custom Metadata Types enables versioning, consistency, and centralized governance across environments.

Charter Components in Salesforce:

- Agent purpose → Agent description & documentation

- Allowed actions → Invocable Flow/Apex allowlist

- Prohibited actions → Permission exclusions

- Risk tier → Custom metadata type

- Owner → Named Salesforce user / role

Pro Tip: Store Agent Charters as Custom Metadata Types for versioning and governance.
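As a data structure, the five charter components could be modeled roughly as follows. This is a language-neutral illustration in Python, not a Custom Metadata Type definition; `AgentCharter` and its field names are hypothetical, and the 0 to 3 tier range assumes the four-tier classification described earlier.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentCharter:
    purpose: str
    allowed_actions: frozenset
    prohibited_actions: frozenset
    risk_tier: int
    owner: str

    def validate(self) -> list:
        """Return a list of problems; an empty list means the charter is usable."""
        problems = []
        if not self.purpose.strip():
            problems.append("purpose is empty")
        if not self.owner.strip():
            problems.append("owner is not named")
        overlap = self.allowed_actions & self.prohibited_actions
        if overlap:
            problems.append(f"actions both allowed and prohibited: {sorted(overlap)}")
        if self.risk_tier not in (0, 1, 2, 3):
            problems.append("risk_tier must be 0 to 3")
        return problems

    def permits(self, action: str) -> bool:
        """Allowlist semantics: anything not explicitly allowed is refused."""
        return action in self.allowed_actions and action not in self.prohibited_actions


charter = AgentCharter(
    purpose="Draft replies to billing questions for human review.",
    allowed_actions=frozenset({"read_invoice", "draft_reply"}),
    prohibited_actions=frozenset({"issue_refund"}),
    risk_tier=1,
    owner="billing.ops.lead",
)
problems = charter.validate()
```

Validating the charter before deployment catches contradictions, such as an action that is both allowed and prohibited, while they are still cheap to fix.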

Prompt = Code → Salesforce Change Management

In AgentOps, prompts are treated as source code, and in Salesforce, that means they must follow formal change management processes. Prompt updates should be version-controlled through DevOps Center or Git, routed through approval workflows and CI pipelines, deployed via metadata management for rollback capability, and validated across sandbox environments to ensure parity.

Updating an Agentforce prompt directly in production without governance is the AI equivalent of editing Apex in production: risky, uncontrolled, and unacceptable in an enterprise setting.

| AgentOps Requirement | Salesforce Mechanism |

| Version control | DevOps Center / Git |

| Approval workflows | Change sets / CI pipelines |

| Rollback | Metadata deployment |

| Environment parity | Sandbox strategy |

Updating an Agentforce prompt in prod without governance is the AI equivalent of editing Apex directly in production.

Permission & Identity Model

In Salesforce, Agentforce agents operate as first-class Salesforce users, making identity and access control central to AgentOps. Each agent must have a dedicated user account with least-privilege permission sets, no shared credentials, and no administrative access. Salesforce enforces this through separate agent profiles, scoped permission sets, field-level security, and object-level CRUD controls.

The governing rule is simple: if an agent can perform an action, it is because Salesforce explicitly permitted it, ensuring all autonomous behavior remains intentional, controlled, and auditable.

AgentOps enforces:

- Dedicated Agent User Accounts

- Least-privilege permission sets

- No shared credentials

- No admin rights

Salesforce Enforcement

- Separate profiles for agents

- Scoped permission sets per agent

- Field-level security

- Object-level CRUD enforcement

If an agent can do something, it’s because Salesforce explicitly allowed it.

Controlled Autonomy → Salesforce Flow Patterns

Controlled autonomy in AgentOps is enforced through Salesforce Flow, not embedded directly in the agent itself. Flows provide the governance layer by applying entry criteria, decision logic, approval steps, confidence thresholds, and human checkpoints before any action is executed.

This enables a maturity-based pattern where agents initially recommend actions that are validated and approved by humans, and over time progress to validated, automated execution with human monitoring. Using Flow ensures autonomy is incremental, auditable, and always reversible.

AgentOps Autonomy Controls in Salesforce

- Entry criteria

- Approval steps

- Decision nodes

- Confidence thresholds

- Human checkpoints

Pattern: Agent recommends → Flow validates → Human approves → System executes

Over time: Agent recommends → Flow validates → System executes → Human monitors
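The two patterns above differ only in where the human sits. The sketch below expresses that as a single gate function in Python; it is not Flow configuration, and `Recommendation`, `run_gate`, and the threshold parameters are hypothetical names used to show the control logic.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Recommendation:
    action: str
    amount: float
    confidence: float  # agent's self-reported confidence, 0.0 to 1.0


def run_gate(
    rec: Recommendation,
    *,
    max_amount: float,
    min_confidence: float,
    autonomous: bool,
    approve: Optional[Callable[[Recommendation], bool]] = None,
) -> str:
    """Return 'executed', 'rejected', or 'needs_approval'."""
    # Validation step: entry criteria and confidence threshold.
    if rec.amount > max_amount or rec.confidence < min_confidence:
        return "rejected"
    # Mature pattern: validated actions run, humans monitor afterwards.
    if autonomous:
        return "executed"
    # Early pattern: a human checkpoint decides.
    if approve is None:
        return "needs_approval"
    return "executed" if approve(rec) else "rejected"


refund = Recommendation("issue_refund", 40.0, 0.92)
limits = {"max_amount": 100.0, "min_confidence": 0.8}

early = run_gate(refund, autonomous=False, **limits)
approved = run_gate(refund, autonomous=False, approve=lambda r: True, **limits)
mature = run_gate(refund, autonomous=True, **limits)
too_large = run_gate(Recommendation("issue_refund", 500.0, 0.99), autonomous=True, **limits)
```

Validation runs in both modes, so raising autonomy removes the approval step without removing the checks, and setting `autonomous` back to `False` reverses it.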

Testing & Validation → Agent CI/CD in Salesforce

AgentOps introduces true Agent CI/CD within Salesforce, where testing focuses on validating behavior rather than just technical syntax. This includes sandbox-based scenario testing, replaying historical records, adversarial prompt testing, and negative case validation to ensure agents act appropriately under real-world conditions.

Using tools such as Salesforce Sandboxes, DevOps Center, Apex test classes, and Flow testing capabilities, organizations can simulate outcomes before production deployment.

The goal is not merely to confirm that code runs, but to verify that autonomous behavior is predictable, controlled, and aligned with business intent.

AgentOps Testing in Salesforce Includes:

- Sandbox-based scenario testing

- Replay of historical records

- Adversarial prompt testing

- Negative case validation

Tooling

- Salesforce Sandboxes

- DevOps Center

- Apex test classes

- Flow tests (where supported)

You are testing behavior, not syntax.
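A behavioral test asserts on the action an agent chooses, including actions it must never choose. The following Python sketch shows the shape of such a harness; `stub_agent` is a placeholder for a real agent call in a sandbox, and the scenario data is invented for illustration.

```python
def stub_agent(case: dict) -> str:
    """Stand-in for an agent decision; a real test would call the agent in a sandbox."""
    if case.get("contains_payment_data"):
        return "escalate_to_human"
    if case.get("priority") == "high":
        return "route_to_tier2"
    return "send_standard_reply"


# Each scenario names the expected action and the actions that must never occur.
SCENARIOS = [
    {
        "name": "replay: historical high-priority case",
        "case": {"priority": "high"},
        "expect": "route_to_tier2",
        "forbid": {"close_case"},
    },
    {
        "name": "negative: payment data present",
        "case": {"priority": "high", "contains_payment_data": True},
        "expect": "escalate_to_human",
        "forbid": {"send_standard_reply", "route_to_tier2"},
    },
]


def run_scenarios(agent, scenarios) -> list:
    """Return the names of failing scenarios."""
    failures = []
    for s in scenarios:
        action = agent(s["case"])
        if action != s["expect"] or action in s["forbid"]:
            failures.append(s["name"])
    return failures


failures = run_scenarios(stub_agent, SCENARIOS)
```

Running the same scenario set against every prompt or configuration change turns it into a regression suite for behavior.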

Observability & Audit → Salesforce Shield + Event Monitoring

AgentOps observability aligns naturally with Salesforce’s native monitoring and audit capabilities. Event Monitoring provides detailed action logging; custom objects and logs capture decision traces; Shield Event Logs track data access; Field History Tracking records changes to records; and Shield Audit Trail supplies compliance evidence.

Together, these tools ensure full transparency into agent behavior.

The governing principle is simple: every agent-triggered action must be clearly distinguishable from a human action, preserving accountability, traceability, and regulatory confidence.

| AgentOps Need | Salesforce Capability |

| Action logging | Event Monitoring |

| Decision traces | Custom objects + logs |

| Data access audit | Shield Event Logs |

| Field changes | Field History Tracking |

| Compliance evidence | Shield Audit Trail |

Key Rule: Every agent-triggered action must be distinguishable from a human action.

Governance, Risk & Ethics → Salesforce Trust Layer

Salesforce already delivers a strong trust foundation through platform-level security, compliance certifications, and data governance controls. AgentOps extends this trust layer by explicitly limiting agent scope, enforcing explainability, and applying ethical and regulatory constraints at execution time rather than relying on intent or guidance alone.

In practice, ethics in Salesforce are enforced through concrete rules, permissions, and policies, not assumptions, ensuring autonomous agents operate within clearly defined and auditable boundaries.

Salesforce already provides:

- Platform-level security

- Compliance certifications

- Data governance controls

AgentOps extends this by:

- Limiting agent scope

- Enforcing explainability

- Applying ethical constraints at execution time

Ethics in Salesforce = Rules, not vibes.

Kill Switches & Incident Response

AgentOps requires the ability to contain risk instantly when something goes wrong. In Salesforce, this is achieved through simple, decisive kill switches such as disabling an agent’s permission set, deactivating related Flows, locking the agent user account, or revoking API access.

These controls allow organizations to halt autonomous behavior within seconds. If an AI agent cannot be shut down immediately and decisively, it is not enterprise-ready.

In Salesforce, kill switches include:

- Disable agent permission set

- Deactivate related Flows

- Lock agent user account

- Revoke API access

If you can’t shut it down in seconds, it’s not enterprise-ready.
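What makes these switches fast is that they are checked at execution time, so no redeployment is needed. A minimal Python sketch of that idea follows; `AgentRuntimeGuard` is a hypothetical in-memory class, not a Salesforce mechanism.

```python
class AgentRuntimeGuard:
    """Checks a kill switch before every action (hypothetical, in-memory)."""

    def __init__(self) -> None:
        self._disabled = set()

    def kill(self, agent_id: str) -> None:
        """Disable the agent; takes effect on its next attempted action."""
        self._disabled.add(agent_id)

    def restore(self, agent_id: str) -> None:
        """Re-enable the agent after the incident is resolved."""
        self._disabled.discard(agent_id)

    def execute(self, agent_id: str, action):
        """Run the action only if the agent has not been disabled."""
        if agent_id in self._disabled:
            raise PermissionError(f"{agent_id} is disabled")
        return action()


guard = AgentRuntimeGuard()
result_before = guard.execute("refund-agent", lambda: "done")

guard.kill("refund-agent")
try:
    guard.execute("refund-agent", lambda: "done")
    blocked = False
except PermissionError:
    blocked = True
```

The same check-before-act structure is why revoking a permission set or deactivating a Flow can stop an agent without touching the agent itself.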

Metrics → Salesforce Reporting & Analytics

AgentOps performance and value can be measured natively within Salesforce using standard reporting and analytics capabilities. Key KPIs such as agent-triggered actions, human override frequency, error rates, time saved, and business outcome impact can be tracked through Salesforce Reports, Dashboards, and Event Monitoring analytics.

These metrics provide clear visibility into both effectiveness and risk. If an agent cannot demonstrate measurable return on investment, it should be refined or retired.

AgentOps KPIs can be tracked natively:

- Agent-triggered actions per day

- Override frequency

- Error rates

- Time saved

- Business outcome impact

Use:

- Salesforce Reports

- Dashboards

- Event Monitoring analytics

If the agent doesn’t show ROI, retire it.
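Several of these KPIs are simple ratios over the agent's action log. The Python sketch below shows the arithmetic; the event fields (`day`, `status`, `overridden`) are assumed for illustration and the sample events are made up, not measurements.

```python
from collections import Counter


def kpis(events: list) -> dict:
    """Compute basic AgentOps KPIs from a list of action events."""
    total = len(events)
    if total == 0:
        return {"actions_per_day": 0.0, "override_rate": 0.0, "error_rate": 0.0}
    days = Counter(e["day"] for e in events)
    return {
        # Average over the days on which the agent acted at least once.
        "actions_per_day": total / len(days),
        "override_rate": sum(1 for e in events if e["overridden"]) / total,
        "error_rate": sum(1 for e in events if e["status"] == "error") / total,
    }


# Invented sample events, for illustration only.
sample_events = [
    {"day": "2025-01-06", "status": "success", "overridden": False},
    {"day": "2025-01-06", "status": "success", "overridden": True},
    {"day": "2025-01-07", "status": "error", "overridden": False},
    {"day": "2025-01-07", "status": "success", "overridden": False},
]

summary = kpis(sample_events)
```

Time saved and business outcome impact need a baseline from the pre-agent process, so they are left out of this sketch.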

AgentOps Maturity in Salesforce

AgentOps maturity within Salesforce progresses through defined stages, beginning with isolated Agentforce pilots and advancing to controlled Flows with approval mechanisms. As governance strengthens, organizations implement Shield and full auditability, then expand into multi-agent orchestration across processes, and ultimately reach continuous optimization with measurable performance refinement.

Today, most organizations remain at Levels 1–2, experimenting or operating with limited controls, leaving significant opportunity to mature toward scalable, fully governed autonomy.

| Level | Salesforce Reality |

| 1 | Agentforce pilot |

| 2 | Controlled Flows + approvals |

| 3 | Shield + auditability |

| 4 | Multi-agent orchestration |

| 5 | Continuous optimization |

The Strategic Advantage of Salesforce for AgentOps

Salesforce offers a distinct strategic advantage for AgentOps because governance is inherent to the platform rather than an afterthought. Identity management, permission enforcement, auditability, and metadata-driven governance are native capabilities, and AI agents operate directly within the system of record. While other platforms attempt to bolt governance on after deployment, Salesforce enforces it by default, making it uniquely suited for running autonomous agents in a controlled, enterprise-ready manner.

Salesforce is uniquely positioned because:

- Identity is native

- Permissions are enforced

- Auditability is built-in

- Governance is metadata-driven

- AI runs inside the system of record

Other platforms bolt governance on. Salesforce enforces it by default.

The Bottom Line

The bottom line is clear: Agentforce combined with AgentOps transforms AI agents from experimental features into governed, auditable, and trusted enterprise assets.

Without AgentOps, Agentforce remains a compelling demo with limited production viability. With AgentOps in place, it becomes a true platform for autonomous business execution, capable of delivering scalable value while maintaining accountability, control, and trust.

Agentforce + AgentOps turns AI agents into:

- Governed systems

- Auditable actors

- Trusted operators

- Enterprise assets

Without AgentOps, Agentforce is a demo. With AgentOps, it’s a platform for autonomous business execution.

Ultimately, AgentOps is about enabling success in an environment where AI agents are not just tools, but active participants in business processes. By investing in AgentOps, companies can reduce risk, improve system resilience, and maximize the return on their AI investments.

As the complexity of AI systems grows, so too does the importance of AgentOps, making it a critical capability for any organization seeking to lead in the era of autonomous AI agents.

Bring AgentOps and Agentforce to Production With Summit

If you’re exploring Salesforce Agentforce, the fastest path to real value is to pair the platform’s capabilities with an operating model built for autonomy. That’s exactly where Summit can help: we connect Salesforce AI strategy, data foundations, security, and delivery discipline so your agents earn trust — and scale.

How Summit helps operationalize Agentforce with AgentOps:

- Define the Agent Charter

- Design governance guardrails

- Build and launch the first production-ready agents

- Stand up observability and reporting

- Create a scalable roadmap

Learn more about our AI Agentforce Quickstart and how we can help with our Salesforce AI Advisory Services.

Ready to move beyond AI pilots? Let’s align on where AI agents and Agentforce can have the greatest business impact, then implement the controls that make leadership comfortable about putting agents into production. Contact us today to discuss your AI initiative.

Agentforce is the runtime. AgentOps is the discipline. Summit is the partner that helps you make it real.

AgentOps for Salesforce Agentforce FAQs

What is AgentOps in simple terms?

AgentOps is the operating discipline that ensures AI agents are safe, accountable, observable, and scalable in the enterprise. It defines how agents are governed throughout their lifecycle, enabling them to act autonomously without introducing unacceptable risk.